What ERP Taught Us About AI and What Leaders Have Already Forgotten

In the early 1990s, enterprise resource planning software was going to transform how organisations operated. The technology was ready. The vendors were confident. The business case was compelling. And for the next decade, by some estimates as many as three-quarters of ERP implementations failed to meet their objectives, with average cost overruns in the worst years approaching twice the original budget.¹ Not because the software was bad. Because organisations treated a coordination problem as a technology problem, and discovered, expensively, repeatedly, over years, that the difference matters.

Thirty years later, the same pattern is unfolding with artificial intelligence. The technology is further ahead than the organisations attempting to absorb it. The gap between technical capability and organisational readiness is widening, not narrowing. And the leaders best positioned to recognise what is happening are the ones who lived through ERP, yet many of them have not connected the parallels. The fog of hype around ERP has long since lifted. What remains is a well-documented record of how organisations adopt general-purpose technology that touches every function, and how consistently they underestimate what that requires.

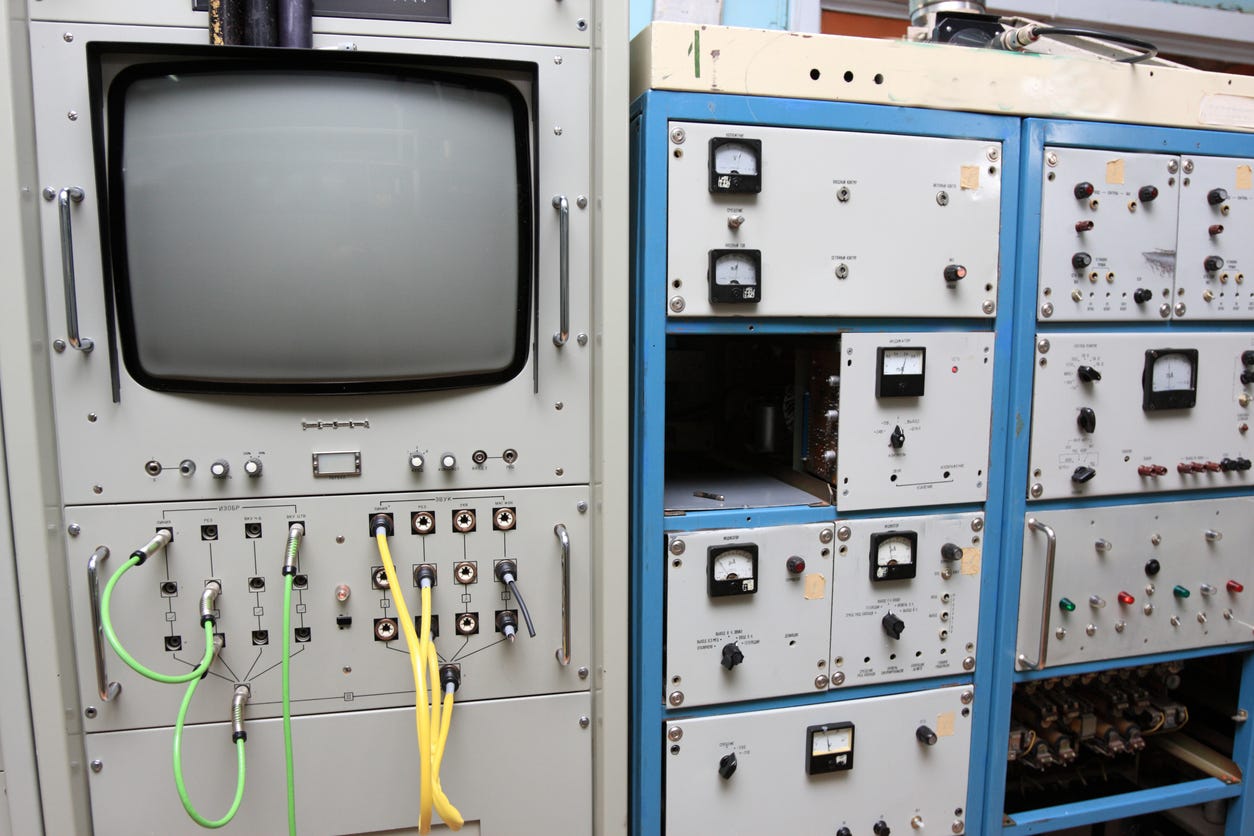

ERP systems promised a single integrated platform across finance, operations, HR, procurement, and supply chain. The technology delivered on that promise. What it required in return was something vendors mentioned in passing and organisations discovered in practice: every function had to agree on shared processes, shared data definitions, shared workflows, and shared accountability. A finance team that had always owned its own reporting structure now needed to reconcile that structure with operations. A procurement function that had built its processes around local flexibility now needed to standardise across the enterprise. An HR team that had never shared data with supply chain now needed to operate on a common platform with common rules.

Each of these was a coordination problem, not a technical one. And each was owned by a different role, with different incentives, different priorities, and a different definition of what a successful implementation would look like.

The result was predictable in hindsight and painful in practice. Implementations budgeted for eighteen months took three to five years. Projects scoped at one cost came in at two or three times the estimate. Hershey’s $112 million ERP deployment cut testing phases to meet an aggressive deadline and failed on launch, with transactions unable to flow across its CRM, ERP, and supply chain systems.² FoxMeyer, a major pharmaceutical distributor, saw its ERP implementation contribute to the company’s bankruptcy.³ These were not small companies making amateur mistakes. They were sophisticated organisations undone by the same structural problem: the technology worked, but the functions responsible for making it work could not align fast enough to make it stick.

The organisations that succeeded did something specific. They sequenced. They identified which functions were ready to move first, let those functions stabilise, and used the evidence from early adopters to build the case for follow-on functions. They accepted that different roles would move at different speeds and treated lag as a structural feature rather than a failure of commitment. They measured alignment across functions before committing capital to the next phase. And they treated timing as a decision variable, understanding that moving too early in a function that was not ready carried costs that compounded across the entire programme.

The organisations that failed did the opposite. They set enterprise-wide deadlines. They mandated simultaneous adoption. They confused executive ambition with organisational readiness. And they spent years unwinding the consequences.

The parallels with AI adoption are not approximate. They are structural.

AI, like ERP, is a general-purpose capability that touches every function. Marketing uses it differently from engineering. Finance evaluates it against different criteria than operations. The CEO must reconcile perspectives from roles that are experiencing different realities, operating under different constraints, and reaching different conclusions from the same evidence.

AI, like ERP, is being sold on a timeline that reflects the technology’s readiness, not the organisation’s. Vendors promise rapid deployment. Pilots show quick wins. The pressure to scale is intense. But the organisational coordination required to move from a successful pilot to a durable, cross-functional capability operates on a fundamentally different timescale, and no amount of urgency changes that.

AI, like ERP, produces its sharpest failures not when the technology breaks but when alignment breaks. Ninety-five per cent of enterprise generative AI pilots fail to deliver measurable financial returns.⁴ According to IDC, for every thirty-three prototypes a company builds, only four reach production.⁵ Nearly two-thirds of organisations remain stuck in the pilot stage. According to S&P Global Market Intelligence, forty-two per cent of companies abandoned most of their AI initiatives in 2025, more than double the previous year’s rate.⁶ BCG’s widely cited research attributes roughly ten per cent of AI adoption challenges to algorithms, twenty per cent to technology and data, and seventy per cent to people, processes, and cultural change.⁷ The dominant blockers are not technical. They are misalignment on value, risk, ownership, and timing across the roles responsible for making it work.

And AI, like ERP, is being treated as a deployment problem when it is a coordination problem. The difference is not semantic. A deployment problem responds to better tools, more investment, faster timelines. A coordination problem responds to sequencing, alignment, and the patient accumulation of shared conviction across functions. Applying deployment solutions to a coordination problem does not accelerate progress. It widens the gap between the roles that have moved and the roles that have not, which is precisely the gap that stalls everything downstream.

Quaie’s Q1 2026 fieldwork with senior decision-makers is surfacing this dynamic at a level of resolution that the ERP era lacked. The working hypothesis, as we measure AI adoption readiness across executive roles, is that CTOs will report high confidence and advanced adoption while CMOs report low confidence and early-stage experimentation, and that the gap between the most advanced and least advanced roles will exceed every other variable measured. The Role Shift Index is designed to track that divergence from quarter to quarter. The Role Alignment Map adds a complementary view: where the Role Shift Index tracks divergence in adoption stage, the Alignment Map tracks whether roles share a common interpretation of AI’s strategic priorities and ownership. In the ERP era, this was the dimension that most consistently separated successful implementations from failed ones: not whether functions had deployed the technology, but whether they had converged on a shared understanding of what it was for and who was responsible for it.

The blocker distribution is likely to tell the same story from a different angle. ROI uncertainty is expected to dominate among CEOs and CMOs. CTOs are more likely to cite integration complexity and security concerns. If that pattern holds, the organisation will not be facing one problem but several, distributed across the people responsible for solving them, with no shared view of where those problems sit relative to each other. The Organisational Adoption Gradient, the distance between the most advanced and least advanced roles, is designed to make this spread visible rather than allowing it to be concealed within an enterprise-level average. The Role Influence Index reveals a further dimension relevant to the ERP parallel: in both eras, the roles with the greatest formal authority over technology decisions were not always the roles with the greatest influence over whether adoption succeeded. Understanding which roles act as catalysts, validators, or gatekeepers, and whether that influence structure is consistent with the coordination the organisation needs, is as important now as it was then.

This is the ERP failure pattern, repeating with different technology and the same organisational dynamics.

The leaders who navigated ERP successfully learned something that most AI adoption frameworks have not yet absorbed: the technology is the easy part. The hard part is getting different roles, with different authorities, different risk tolerances, and different definitions of success, to converge on a shared commitment, and doing so in the right sequence, at the right pace, with the right evidence at each stage.

That lesson cost organisations billions of pounds and a decade of difficult implementation to learn. It is available for free to any leadership team willing to apply it to AI.

The starting point is the same now as it was then. Map readiness by role, not by department. Identify which functions are prepared to move and which are waiting for evidence, governance clarity, or budget justification. Sequence investment so that early movers generate proof that follow-on roles can evaluate, rather than mandating simultaneous adoption that creates friction between functions moving at different speeds. And treat the gap between roles as information, not failure. It tells you where alignment must form before commitment becomes rational. The Role Lead-Lag Ranking makes this sequencing visible, tracking the temporal distance between roles as they move through adoption stages, and revealing whether the organisation is converging toward shared conviction or pulling further apart. Consensus Formation Time estimates how long that convergence is likely to take, giving leaders a forward-looking view of their decision timeline rather than a backward-looking account of what has already been deployed.
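For readers who prefer to see the arithmetic, the mapping step can be sketched in a few lines of Python. This is an illustration only, not Quaie’s method: the role names, the one-to-five stage scale, and the helper functions `adoption_gradient` and `rollout_sequence` are all assumptions made for the example. It takes the gradient in its simplest possible form, the difference between the highest and lowest stage, and orders roles from most to least advanced.

```python
# Hypothetical illustration: the roles, the 1-5 stage scale and both helper
# functions are assumptions for this sketch, not Quaie's data or method.
ADOPTION_STAGES = {"CTO": 4, "COO": 3, "CFO": 2, "CEO": 2, "CMO": 1}


def adoption_gradient(stages):
    """Distance between the most advanced and least advanced roles."""
    return max(stages.values()) - min(stages.values())


def rollout_sequence(stages):
    """Roles ordered from most to least advanced; ties broken alphabetically."""
    return sorted(stages, key=lambda role: (-stages[role], role))


if __name__ == "__main__":
    print("Gradient:", adoption_gradient(ADOPTION_STAGES))
    print("Sequence:", rollout_sequence(ADOPTION_STAGES))
```

With these made-up figures the gradient is three stages and the CTO’s function moves first. The point of the sketch is only that an enterprise-level average would hide both facts.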

The question is whether leaders will apply what the ERP era taught, or whether the lesson will need to be relearned from scratch, expensively, repeatedly, over years, because the urgency of the technology obscured the patience the organisation required.

The technology has changed. The organisational problem has not.

This is the first essay in Quaie’s ongoing research into how organisations decide to adopt AI, role by role, over time.

Notes and Sources

¹ ERP implementation failure rates and cost overruns: Panorama Consulting Group, annual ERP reports (2010–2020). Panorama’s longitudinal research consistently found that 55–75 per cent of ERP implementations failed to meet objectives, with average cost overruns of 59–189 per cent depending on measurement year and methodology. Gartner research on ERP implementation outcomes corroborates this range.

² Hershey’s ERP failure: Hershey Foods implemented a $112 million SAP R/3, Siebel CRM, and Manugistics supply chain system in 1999. The company compressed a 48-month implementation schedule to 30 months to meet a deadline. The system went live in July 1999, the beginning of the peak Halloween ordering season, and failed to process orders correctly. Hershey reported a 19 per cent decline in third-quarter profits. Reported widely; primary coverage in CIO Magazine, Wall Street Journal, and subsequent Harvard Business School case studies.

³ FoxMeyer Drug bankruptcy: FoxMeyer, the fourth-largest pharmaceutical distributor in the United States, filed for bankruptcy in 1996 following a failed SAP R/3 and Delta III warehouse automation implementation. The company’s bankruptcy trustee subsequently sued SAP, Andersen Consulting (now Accenture), and other parties. The case is widely studied in information systems literature as an example of catastrophic ERP failure. See: Scott, J.E. and Vessey, I. (2002), “Managing Risks in Enterprise Systems Implementations,” Communications of the ACM, 45(4).

⁴ 95 per cent of generative AI pilots failing to deliver measurable financial returns: Reported across multiple analyst sources, 2024–2025. Gartner predicted in July 2024 that at least 30 per cent of generative AI projects would be abandoned after proof of concept by the end of 2025 (Gartner Data & Analytics Summit, Sydney, July 2024).

⁵ IDC prototype-to-production ratio: IDC research findings on enterprise AI deployment, cited across industry reporting, 2024–2025. For every 33 AI prototypes built, approximately 4 reached production deployment.

⁶ S&P Global Market Intelligence: 42 per cent of companies abandoned most AI initiatives in 2025. S&P Global Market Intelligence, 451 Research survey, published 2025.

⁷ BCG AI adoption composition: Boston Consulting Group, “From Potential to Profit: Closing the AI Impact Gap” (AI Radar 2025), January 2025. Survey of 1,803 C-level executives across 19 markets. BCG’s related publications cite approximately 70 per cent of AI challenges stemming from people, processes, and cultural change rather than technology.

Quaie’s six analytical constructs referenced in this essay (the Role Shift Index, Organisational Adoption Gradient, Role Alignment Map, Role Influence Index, Role Lead-Lag Ranking, and Consensus Formation Time) are described in full in the forthcoming book The Role Layer: The Missing Intelligence in Enterprise AI Adoption (Quaie Ltd, 2026) and in subsequent essays in this series.